Problems define markets

- Thinking in terms of problems gives you a more accurate understanding of the market in which your product is really competing.

- A market is defined by customer needs, not by any specific solution that meets those needs. That is why you see “market disruptions”: a new type of product (solution space) better meets the market needs (problem space).

- The “what” describes the benefits that the product should give the customer—what the product will accomplish for the user or allow the user to accomplish.

- The “how” is the way in which the product delivers the “what” to the customer. The “how” is the design of the product and the specific technology used to implement the product. “What” is problem space and “how” is solution space.

How = solution space

Lean product teams articulate the hypotheses they have made and solicit customer feedback on early design ideas to test those hypotheses.

Should you listen to customers?

It’s true that customers aren’t going to lead you to the Promised Land of a break-through innovative product, but customer feedback is like a flashlight in the night: it keeps you from falling off a cliff as you try to find your way there.

Using the solution space to discover the problem space

- It is hard for customers to talk about abstract benefits and the relative importance of each, and when they do, what they say is often inaccurate.

- This can be solved by techniques like "contextual inquiry" or "customer discovery"

- The reality is that customers are much better at giving you feedback in the solution space. If you show them a new product or design, they can tell you what they like and don’t like. They can compare it to other solutions and identify pros and cons.

- The best problem space learning often comes from feedback you receive from customers on the solution space artifacts you have created

Divergent and convergent thinking

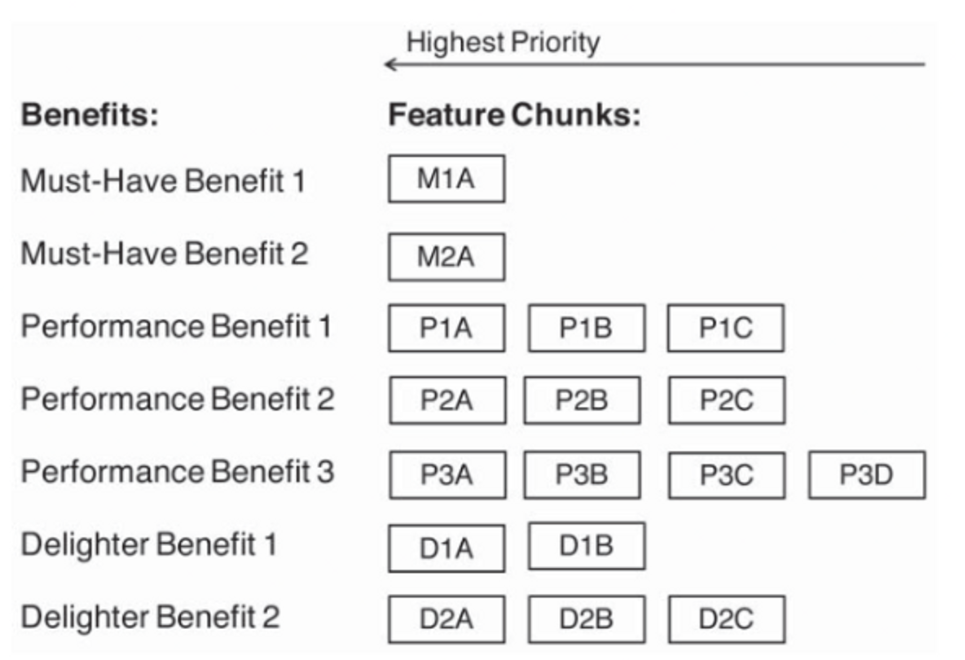

You should be practicing divergent thinking, which means trying to generate as many ideas as possible without any judgment or evaluation. There will be plenty of time later for convergent thinking, where you evaluate the ideas and decide which ones you think are the most promising.

- Capture all the ideas that your team generated, then organize them by the benefit that they deliver.

- Then, for each benefit, you want to review and prioritize the list of feature ideas.

- You can score each idea on expected customer value to determine a first-pass priority.

- The goal is to identify the top three to five features for each benefit.

- There is not much value in looking beyond those top features right now because things will change—a lot—after you show your prototype to customers.
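The prioritization steps above can be sketched in Python. This is a minimal illustration rather than a prescribed method: the `FeatureIdea` type, the 1-10 value scale, and the sample ideas are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class FeatureIdea:
    name: str
    benefit: str         # the benefit this idea delivers
    customer_value: int  # assumed 1-10 score; the scale is illustrative


def top_features_by_benefit(ideas, top_n=5):
    """Group ideas by benefit, then keep the top_n highest-value ideas per group."""
    grouped = defaultdict(list)
    for idea in ideas:
        grouped[idea.benefit].append(idea)
    return {
        benefit: sorted(group, key=lambda i: i.customer_value, reverse=True)[:top_n]
        for benefit, group in grouped.items()
    }


# Hypothetical brainstorm output for a photo-sharing product.
ideas = [
    FeatureIdea("One-click camera import", "Upload quickly", 9),
    FeatureIdea("Bulk drag-and-drop", "Upload quickly", 7),
    FeatureIdea("Email upload", "Upload quickly", 3),
    FeatureIdea("Private client galleries", "Share with clients", 8),
    FeatureIdea("Watermarking", "Share with clients", 5),
]

shortlist = top_features_by_benefit(ideas, top_n=2)
for benefit, features in shortlist.items():
    print(benefit, "->", [f.name for f in features])
```

The scores are only a first-pass ordering; the shortlist is expected to change once customers have seen a prototype.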

A user story is a brief description of the benefit that the particular functionality should provide, including whom the benefit is for (the target customer), and why the customer wants the benefit.

e.g.: As a professional photographer, I want to easily upload pictures from my camera to my website, so that I can quickly show my clients their pictures.

Good user stories follow the INVEST criteria:

I - Independent of other stories

N - Negotiable: not an explicit contract; flexible and open for discussion

V - Valuable to the customer

E - Estimable: scope can be reasonably estimated

S - Small

T - Testable

Small tickets and smaller ticket batch sizes are better

- Break tickets into atomic chunks. Trim extra stuff

- The batch size is the number of products worked on at the same time in parallel. Smaller batches move faster -> faster feedback -> reduced risk and waste

- The longer you work on a product without getting customer feedback, the more you risk a major disconnect that subsequently requires significant rework.

Scoping with story points

- Story points - type of currency for estimating relative ticket size

- Have a max threshold for story points

- Good operating principle - break down stories with high points into smaller stories
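As a rough sketch of the threshold rule above, the snippet below flags stories that exceed a maximum point value so they can be broken down. The cap of 5 and the backlog entries are hypothetical; each team picks its own limit.

```python
MAX_STORY_POINTS = 5  # assumed team threshold; not a value from the notes


def stories_to_split(stories, max_points=MAX_STORY_POINTS):
    """Return the titles of stories whose estimate exceeds the threshold."""
    return [title for title, points in stories.items() if points > max_points]


# Hypothetical backlog: story title -> estimated story points.
backlog = {
    "Upload photos from camera": 8,
    "Rename an album": 2,
    "Share gallery link with client": 5,
    "Full-text search across captions": 13,
}

oversized = stories_to_split(backlog)
print(oversized)
```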

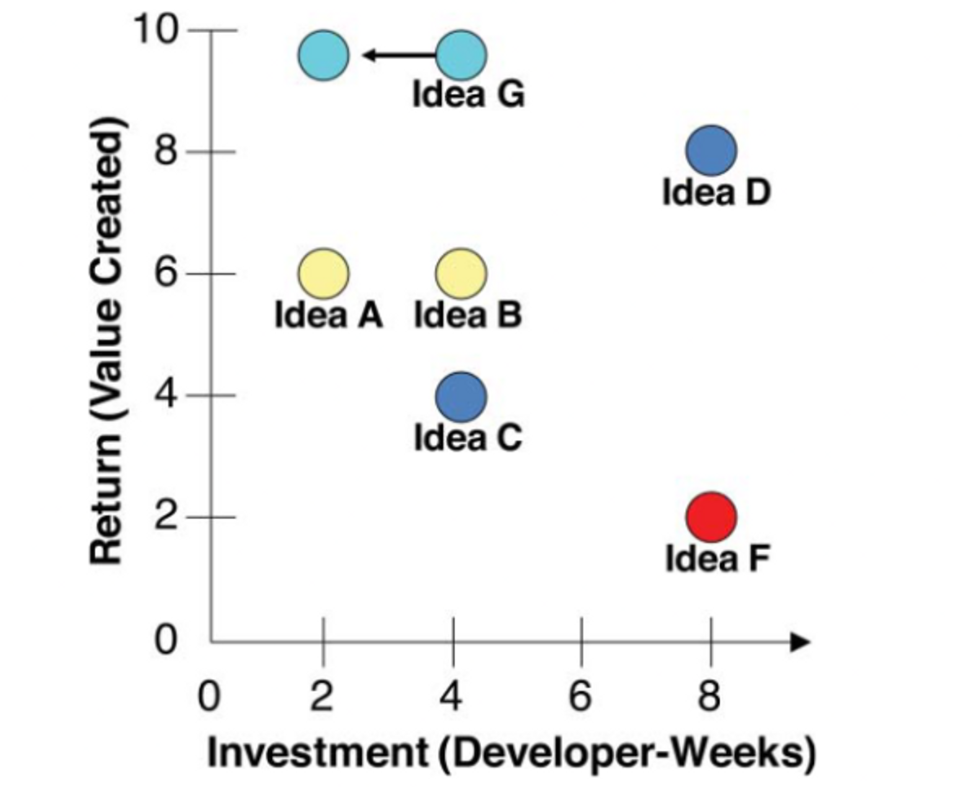

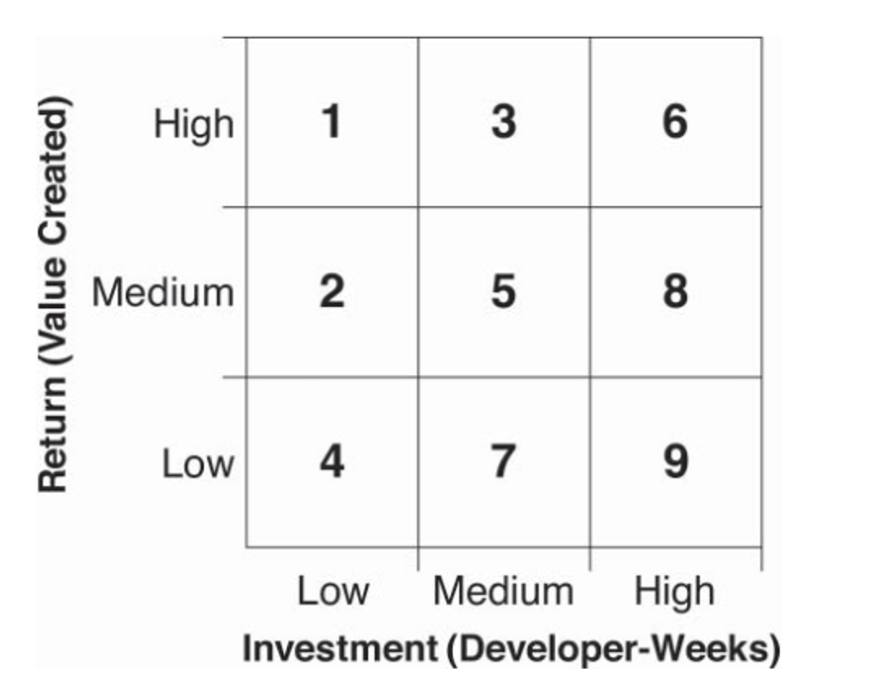

Return on investment to prioritize

- When you are building a product or feature, the investment is usually the time that your development resources spend working on it, which you generally measure in units such as developer-weeks (one developer working for one week).

- It’s true that you could probably calculate an equivalent dollar amount, but people use units like developer-weeks because they are simpler and clearer

Visualizing ROI

- Good product teams strive to come up with ideas like idea G in Figure 6.1—the ones that create high customer value for low effort.

- Great product teams are able to take ideas like that, break them down into chunks, trim off less valuable pieces, and identify creative ways to deliver the customer value with less effort than initially scoped—indicated in the figure by moving idea G to the left.

- The accuracy of the estimates should be proportional to the fidelity of the product definition

- The main point of these calculations is less about figuring out actual ROI values and more about how they compare to each other
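A small sketch of the comparison described above, assuming ROI is approximated as estimated customer value divided by effort in developer-weeks. The notes do not give a formula, so that ratio, the function name, and the sample numbers are all assumptions made for illustration.

```python
def approximate_roi(customer_value, effort_dev_weeks):
    """Rough ROI proxy: estimated customer value per developer-week of effort."""
    if effort_dev_weeks <= 0:
        raise ValueError("effort must be a positive number of developer-weeks")
    return customer_value / effort_dev_weeks


# Hypothetical ideas: name -> (estimated customer value, effort in developer-weeks).
ideas = {
    "X": (8, 2),
    "Y": (6, 4),
    "Z": (3, 6),
}

# Only the relative order matters, not the absolute numbers.
ranked = sorted(ideas, key=lambda name: approximate_roi(*ideas[name]), reverse=True)
print(ranked)

# Trimming scope: idea Y loses a little value but needs half the effort.
before = approximate_roi(6, 4)
after = approximate_roi(5, 2)
print(before, after)
```

The second part mirrors the point about great product teams: cutting less valuable pieces can raise the ratio even when the total value drops slightly.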

Approximating ROI and Deciding on MVP candidate

This step only compares the importance of features relative to one another. Once that’s done, arrange all features by benefit category in this format:

- The feature chunks are intentionally given generic names so that you can more easily envision replacing them with what would be relevant for your product. “M1A” means feature chunk A for must-have 1, “P2B” means feature chunk B for performance benefit 2, and “D2C” means feature chunk C for delighter benefit 2.

- Decide on the MINIMUM SET OF FUNCTIONALITY that will resonate with your target customers

- Look down the leftmost column of feature chunks and determine which ones you think need to be in your MVP candidate. While doing so, you should refer to your product value proposition

- After this, focus on the main performance benefit you’re planning to use to beat the competition

- Delighters are part of your differentiation, too. You should include your top delighter in your MVP candidate.
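The naming scheme and selection steps above can be sketched as follows. This is only a first-pass draft under stated assumptions: it treats chunk “A” as the leftmost column, includes chunk A of every must-have, and takes the main performance benefit and top delighter as inputs you choose yourself. The function names and the sample chunk list are hypothetical, and the real decision still needs judgment against your value proposition.

```python
import re

# Codes look like "M1A": category letter, benefit number, chunk letter.
CHUNK_PATTERN = re.compile(r"^([MPD])(\d+)([A-Z])$")
CATEGORY_NAMES = {"M": "must-have", "P": "performance", "D": "delighter"}


def parse_chunk(code):
    """Split a code such as 'P2B' into (category, benefit number, chunk letter)."""
    match = CHUNK_PATTERN.match(code)
    if match is None:
        raise ValueError(f"unrecognized feature chunk code: {code!r}")
    category, benefit, chunk = match.groups()
    return CATEGORY_NAMES[category], int(benefit), chunk


def draft_mvp_candidate(codes, main_performance, top_delighter):
    """Pick leftmost-column chunks: all must-haves, plus the chosen
    performance benefit and the chosen delighter (an assumed rule)."""
    picked = []
    for code in codes:
        category, benefit, chunk = parse_chunk(code)
        if chunk != "A":
            continue  # only the leftmost column is considered in this sketch
        if (
            category == "must-have"
            or (category == "performance" and benefit == main_performance)
            or (category == "delighter" and benefit == top_delighter)
        ):
            picked.append(code)
    return picked


# Hypothetical feature chunk grid, flattened into a list.
chunks = ["M1A", "M1B", "M2A", "P1A", "P1B", "P2A", "D1A", "D2A", "D2C"]
candidate = draft_mvp_candidate(chunks, main_performance=1, top_delighter=2)
print(candidate)
```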

So if you’ve made tentative plans beyond your MVP, you must be prepared to throw them out the window and come up with new plans based on what you learn from customers.

Moving on to MVP and early Prototyping

Use the broad term MVP “prototype” to capture the wide range of items you can test with customers to gain learning. While the first “prototype” you test could be your live MVP, you can gain faster learning with fewer resources by testing your hypotheses before you build your MVP.

What is/isn't an MVP?

- There has been spirited debate over what qualifies as an MVP. Some people argue vehemently that a landing page is a valid MVP. Others say it isn’t, insisting that an MVP must be a real, working product or at least an interactive prototype.

- The way I resolve this dichotomy is to realize that these are all methods to test the hypotheses behind your MVP. By using the term “MVP tests” instead of MVP, the debate goes away

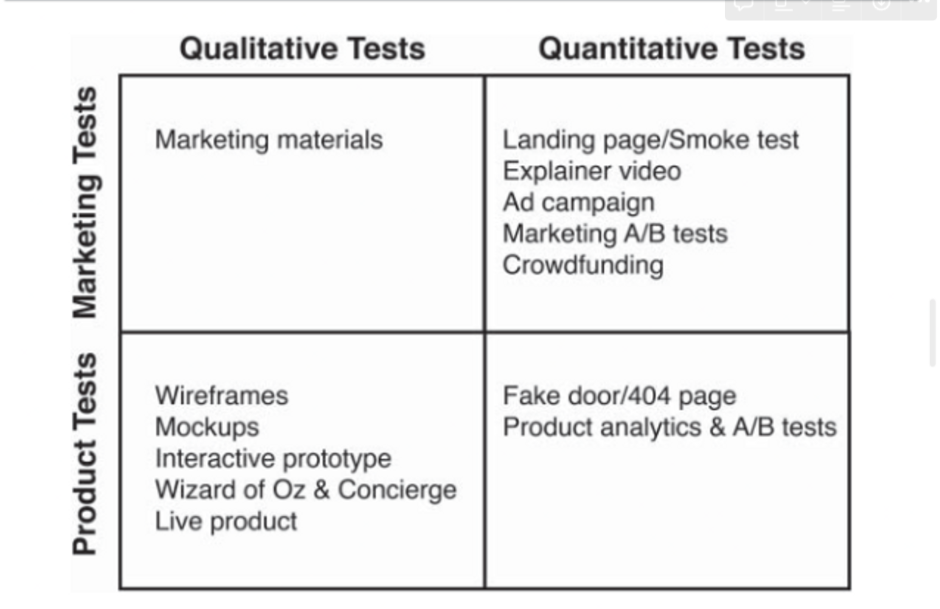

Product vs Marketing MVP Tests

- Marketing test - You’re simply describing the functionality to prospective customers to see how compelling they find your description

- Product test - MVP tests that validate your product involve showing prospective customers functionality to solicit their feedback on it. You may show them a live beta product or just low-fidelity wireframes to assess product-market fit

- Marketing tests can provide valuable learning, but they’re not an actual product that creates customer value. At some point, you need to test a prototype of your MVP candidate

Quantitative vs Qualitative MVP Tests

Qualitative - you are talking with customers directly, usually in small numbers that don’t yield statistical significance.

e.g.: If you conducted one-on-one feedback sessions with 12 prospective customers to solicit their feedback on a mockup of your landing page, that would be qualitative research.

Quantitative - conducting the test at scale with a large number of customers. You don’t care as much about any individual result and are instead interested in the aggregate results.

e.g.: If you launched two versions of your landing page and directed thousands of customers to each one to see which one had the higher conversion rate, then that would be a quantitative test.
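The landing page comparison above comes down to simple arithmetic, sketched here with made-up traffic numbers. The variant names and counts are hypothetical, and a raw difference in rates does not by itself show statistical significance; a real test would add a significance check.

```python
def conversion_rate(conversions, visitors):
    """Share of visitors who took the target action (e.g. clicked "sign up")."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return conversions / visitors


# Hypothetical results for two landing page versions.
variant_a = {"visitors": 4000, "signups": 200}
variant_b = {"visitors": 4000, "signups": 260}

rate_a = conversion_rate(variant_a["signups"], variant_a["visitors"])
rate_b = conversion_rate(variant_b["signups"], variant_b["visitors"])

better = "B" if rate_b > rate_a else "A"
print(f"A: {rate_a:.1%}  B: {rate_b:.1%}  higher conversion: {better}")
```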

Quant = what, how many; Qual = why

Quantitative tests are good for learning “what” and “how many”: what actions customers took and how many customers took an action (e.g., clicked on the “sign up” button). But quantitative tests will not tell you why they chose to do so or why the other customers chose not to do so. In contrast, qualitative tests are good for learning “why”: the reasons behind different customers’ decisions to take an action or not.

Matrix of MVP Tests